What turpentine teaches us about artificial intelligence

The real question about artificial intelligence is less how fast it gets you round the track and more what you built before you added the fuel.

Stand close enough to a Vermeer and something strange happens. The wall behind the milkmaid, that flat, pale expanse you’d expect a lesser painter to dispatch in minutes, begins to move. Lean in and you can see it: almost imperceptible gradations in the hue, shifts so subtle they shouldn’t register as shifts at all. They register as light. As air. As the sense that the room breathes.

What appears as genius is, more precisely, process. Vermeer worked slowly in part because he had to. Oil paint mixed with turpentine stays workable. It can be returned to, adjusted, built up across sessions without leaving the seam. The painter can linger. And it is in that lingering, in the extended engagement with the surface and the sustained inquiry into what is actually there, that the work earns its depth.

We are building AI products the way a bad student learns to paint. Fluently, quickly and at the wrong scale.

Fuel and what we do with it

The dominant framing for AI right now is accelerant. AI as fuel. It is not a bad metaphor, for it captures something true about what the technology does to the speed of work. The problem is not the metaphor. It is what most builders have done with it.

When AI is the fuel, the logical question becomes: what is it fuelling? What is the thing that already has integrity, form and purpose, to which you are now applying this extraordinary energy? That question is largely not being asked. Instead, AI has become the substrate: the building block, the material, the product itself. The capability is the offer. The prompt-to-output pipeline is the entire architecture.

Not all fuel is the same. The jump from crude oil to kerosene to racing fuel is not simply a jump in speed. It is a jump in precision, in control, in what the engine is actually designed to do and for whom. The interesting question about AI as fuel is not how fast it gets you round the track. It is what kind of performance it enables and whether the thing you have built is designed for that kind of performance at all, or whether you have simply bolted an engine to a frame and called it a vehicle.

Look at the current landscape of image generation tools: Midjourney, Higgsfield, Kimi and the rest. Each is a remarkable feat of engineering. Each will get you from prompt to output at speeds that would have seemed implausible three years ago. They are also largely interchangeable and that interchangeability is not incidental. When the AI capability is the product, differentiation becomes nearly impossible. The fuel is the same, the track is the same and the lap times are converging. There is nothing underneath the capability to distinguish one from another, because nothing was built there first.

The layer that goes missing

There is a particular kind of painter who emerged in the years following the widespread adoption of photography. Academically trained, technically formidable, able to produce a portrait that stopped you from a distance. Walk closer and something begins to absent itself. The surface is correct. The likeness is there. But the quality of inquiry, the sense that a human being spent time in genuine and uncertain relationship with the material, is not. This is less a question of skill than of process. The painter who knows how to make something look right, at speed, from accumulated pattern recognition, has skipped the stage in which the work discovers what it actually is. The stage that requires slow fuel.

Turpentine is unusual among the materials of painting because it functions in both directions. It is an accelerant and a retardant. Add it to your paint and you can work faster, thin your material, cover ground. But turpentine also extends working time. It keeps the surface open. It allows a painter to return to an area, to continue the conversation with the material, to push an argument further than a single session would otherwise permit.

Caravaggio, working in Malta in the early 1600s, used this kind of extended surface engagement to produce the almost violent transitions between light and dark that still read as physical force four centuries later. That chiaroscuro is less a style choice than the residue of a process, of a practitioner who stayed with the problem until it told him something.

Gerhard Richter trained in socialist realist painting in East Germany, a discipline that demands accountable, deliberate mark-making. Stand in front of his later work and you can see individual brushstrokes, each one a decision. He took that discipline and turned it on the blur of mass media images. The result is work that understands photography from the inside rather than from observation. The slow process produces the fast insight.

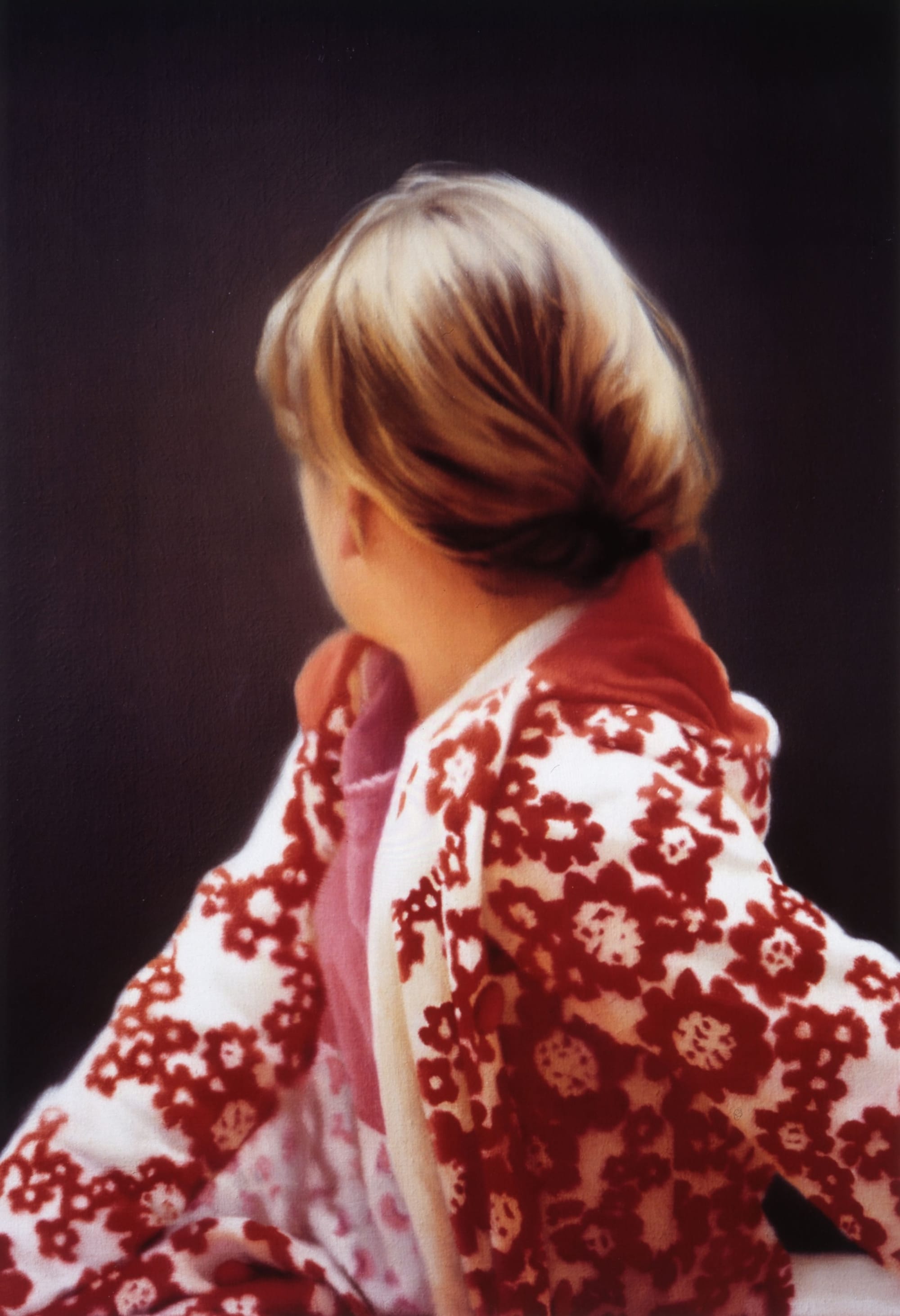

Betty

In 1988, Richter painted his daughter from a photograph. Betty is posed the way Ingres posed his Valpinçon Bather in 1808: turned away, face withheld. The subject refuses the gaze of the onlooker. It is an odd choice for a portrait, whose entire function is to frame a face for beholding. Up close, the painting reveals a sublime texture, layers of paint carrying the residue of extended looking, of a painter who stayed with the surface until it told him something about what it means to be seen.

I fed the painting into an image generation model with a precise prompt: to see what we can discover when she turns to face the camera.

The outcome is of course not Betty. We know it can’t be. But something happened in that turn that made the experiment worth running. We catch a glimpse behind the scene, a woman with similar hair, similar coat, similar stance, turning briefly towards us. Even knowing it is imaginary, we get a sense of the moment. A movement. An inflection. A question about what the original withheld and why.

This is what it looks like to use AI to linger on something. Rather than generating images, it allows us to explore existing ones: to ask a slow question (what is behind the stance, what does the turned face know?) and to use the model’s capability not as an accelerant but as a tool for extended inquiry. The process produced something the prompt-to-output model never would: a closer reading of intent.

Intent is the dimension that most AI product development is not yet asking about. Understanding what the original work was reaching for, and using the technology to examine that more closely rather than to produce something new, is a fundamentally different relationship with the material.

The problem with the screaming gorilla

The test I have come to apply to AI products is a simple one. Could this product exist without AI, or is AI constitutive of its value? Not: does it use AI to do something faster or cheaper? But: is there a genuine human difficulty here, located in a specific process, that this approach uniquely resolves?

Very few current products pass that test. Most are doing something real, in the same way that a technically proficient image of a screaming gorilla is doing something real. The capability is genuine. The output is technically competent. What is missing is the layer between prompt and output where a practitioner’s judgement, their relationship with the material, their capacity to linger and push and adjust, could have lived.

The social media content machine is the clearest symptom of this. The problem was never that creating content was difficult. The problem was always that making it matter was difficult. Acceleration does not touch that problem. It accelerates past it.

Adding turps to the process

A client came to me wanting to build a tool for speechwriters. The initial instinct, the natural AI product instinct, was to build something that could generate better speeches faster. A more capable engine for the same journey.

The approach I took was different. Rather than starting from the output and working backwards, I started from the process. What does a speechwriter actually do? Where in that process does control matter more than speed? Where does the quality of the work depend on being able to linger, to stay with a structural problem, to test a reversal, to feel whether the setup is earning the payoff that follows it?

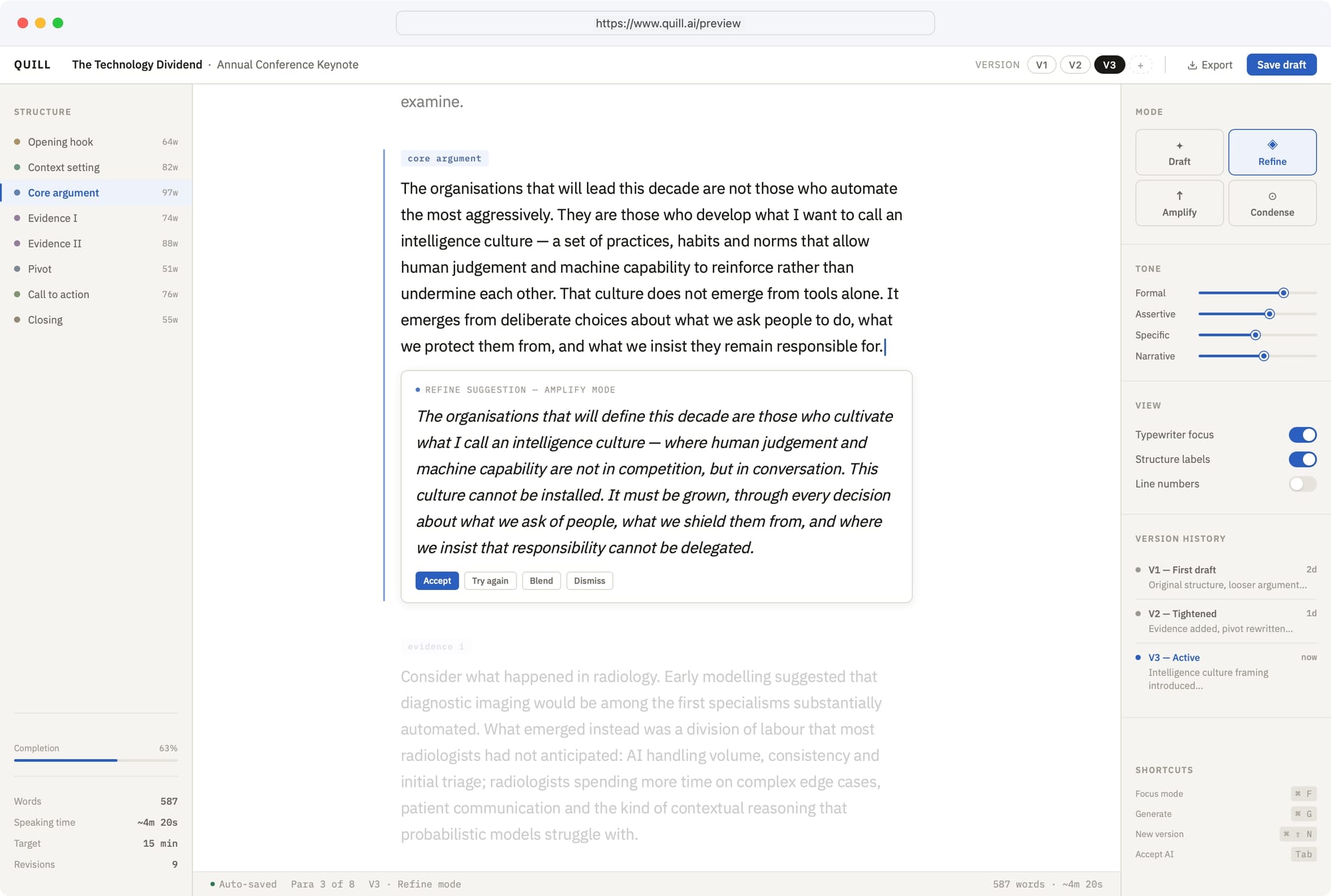

What emerged was not a faster tool. It was a different class of tool. One that understood the stages of composition (structure, argument, rhythm, register) as distinct moments requiring distinct kinds of attention. One that allowed the author to step back, to ask the question behind the question, to add more turps to the process rather than simply burning hotter.

The left panel breaks the speech into named stages (hook, argument, evidence, pivot, close), each tracked by word count and completion. The right panel offers tone controls: sliders for Formal, Assertive, Specific, Narrative. Instead of a ‘generate’ button, there are handles. The version history shows three sessions across two days, each with a named intention. This is what a tool looks like when it is designed around process rather than output.
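For readers who think in code, the shape of that interface can be sketched as a small data model. This is a minimal illustration, not the tool's actual implementation: every class, field and method name here is hypothetical, and the idea that completion is measured against a per-stage target word count is my assumption for the sake of the example.

```python
from dataclasses import dataclass, field


@dataclass
class Stage:
    """One named stage of the speech (hook, argument, evidence, pivot, close)."""
    name: str
    target_words: int  # assumption: completion is measured against a word target
    text: str = ""

    @property
    def word_count(self) -> int:
        return len(self.text.split())

    @property
    def completion(self) -> float:
        """Fraction of the target reached, capped at 1.0."""
        if self.target_words <= 0:
            return 0.0
        return min(1.0, self.word_count / self.target_words)


@dataclass
class Session:
    """One entry in the version history, labelled with what the writer set out to do."""
    intention: str
    day: int


@dataclass
class SpeechDraft:
    stages: list[Stage]
    # Tone handles, each a slider position between 0.0 and 1.0 (hypothetical scale).
    tone: dict[str, float] = field(default_factory=lambda: {
        "Formal": 0.5, "Assertive": 0.5, "Specific": 0.5, "Narrative": 0.5,
    })
    history: list[Session] = field(default_factory=list)

    def set_tone(self, control: str, value: float) -> None:
        """Move one slider, clamping to the valid range."""
        if control not in self.tone:
            raise KeyError(f"unknown tone control: {control}")
        self.tone[control] = max(0.0, min(1.0, value))

    def begin_session(self, intention: str, day: int) -> None:
        """Record a working session with a named intention."""
        self.history.append(Session(intention=intention, day=day))


draft = SpeechDraft(stages=[
    Stage("hook", 80), Stage("argument", 300), Stage("evidence", 250),
    Stage("pivot", 120), Stage("close", 100),
])
draft.begin_session("Find the reversal in the pivot", day=1)
draft.set_tone("Assertive", 0.8)
```

Note what is absent: there is no generate method anywhere in the model. Everything the writer touches is a stage, a slider or a session, which is the point of designing around process rather than output.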

The tool could still generate content. At its heart, the capability was the same. But the relationship between the author and the material had changed entirely. Instead of a one-shot prompt producing an output to be accepted or rejected, the writer had substrate. Friction in the places where friction produces precision and speed in the places where speed is genuinely what is needed. The ‘Blend’ option sitting alongside ‘Accept’ and ‘Dismiss’ is not a minor feature. It is the painter’s vocabulary, built into the interface.

What relevance actually requires

There is a reason that tools like Markdown have endured and evolved where others have not. They did not emerge from a product team specifying capabilities. They emerged from a sustained dialogue between writers and their actual relationship with the material they were making. They embody a quality of process, not just a quality of output.

This is the question that most AI development is currently not asking. Not: what can we build with this capability? But: where does a genuine human difficulty exist, located in a specific kind of process, that this approach uniquely resolves?

The painter who produces technically correct work at thumbnail scale and reveals nothing on close inspection has answered the wrong question. They have asked how to make something look right. The question that produces work worth standing in front of is harder and slower: what am I actually trying to understand about this surface and what does that require of me?

Vermeer’s wall is still breathing 350 years later because he stayed with it long enough to find out what it was. The tools we build from AI capability will matter, and continue to matter, in proportion to whether the people who built them stayed with the problem long enough to find out what it actually was.

Speed gets you round the track. That is not nothing. But it is not enough either. The products that will still be relevant in five years are the ones being built right now by people who understand the difference between an accelerant and a process, and who think carefully about what they are building before they decide what to fuel it with.

Not faster. Better.

Work with me

If this framing resonates and you are sitting with a problem that keeps resisting resolution, I offer a focused discovery conversation to examine it properly. Ninety minutes. €450. Video call.

Tom Flemming is a strategic practitioner working at the intersection of research, service design and innovation. Current work includes Hyperplace, an infrastructure protocol for place-based intelligence and advisory engagements with organisations navigating the gap between what a service is designed to mean and what a person is ready to encounter.